Dev Productivity: Why We Skipped McKinsey Metrics In The Evaluation

These add-on metrics send the wrong signals and hinder, rather than help, efforts to measure developer productivity

Continuing the conversation from our previous articles: leadership demand for understanding developer productivity is growing, and not only at tech companies but at tech-enabled businesses too. The fact that McKinsey has put together a framework shows that it's a question leaders need answered. You might be wondering why I have left it out so far, and that is the topic of today's discussion: how to tell whether a framework is a good fit.

There will always be new sets of metrics hitting the market; many of the ones we've discussed are relatively new (SPACE, for example, was introduced in 2021). Not every framework is worth considering, and ones that send the wrong signals can undermine your efforts to roll out these types of metrics. I believe McKinsey's measurements fall into this category.

McKinsey claims to augment both the DORA and SPACE metrics by adding a few new measurements:

Inner/Outer loop time spent - the inner loop covers core development activities, while the outer loop covers supporting tasks such as meetings

Developer Velocity Index - benchmarking your engineering performance against industry peers

Contribution Analysis - assessing how much teams and individuals contribute to outputs

Talent Capability Score - scoring individual capability levels based on industry surveys

Why They Fall Short

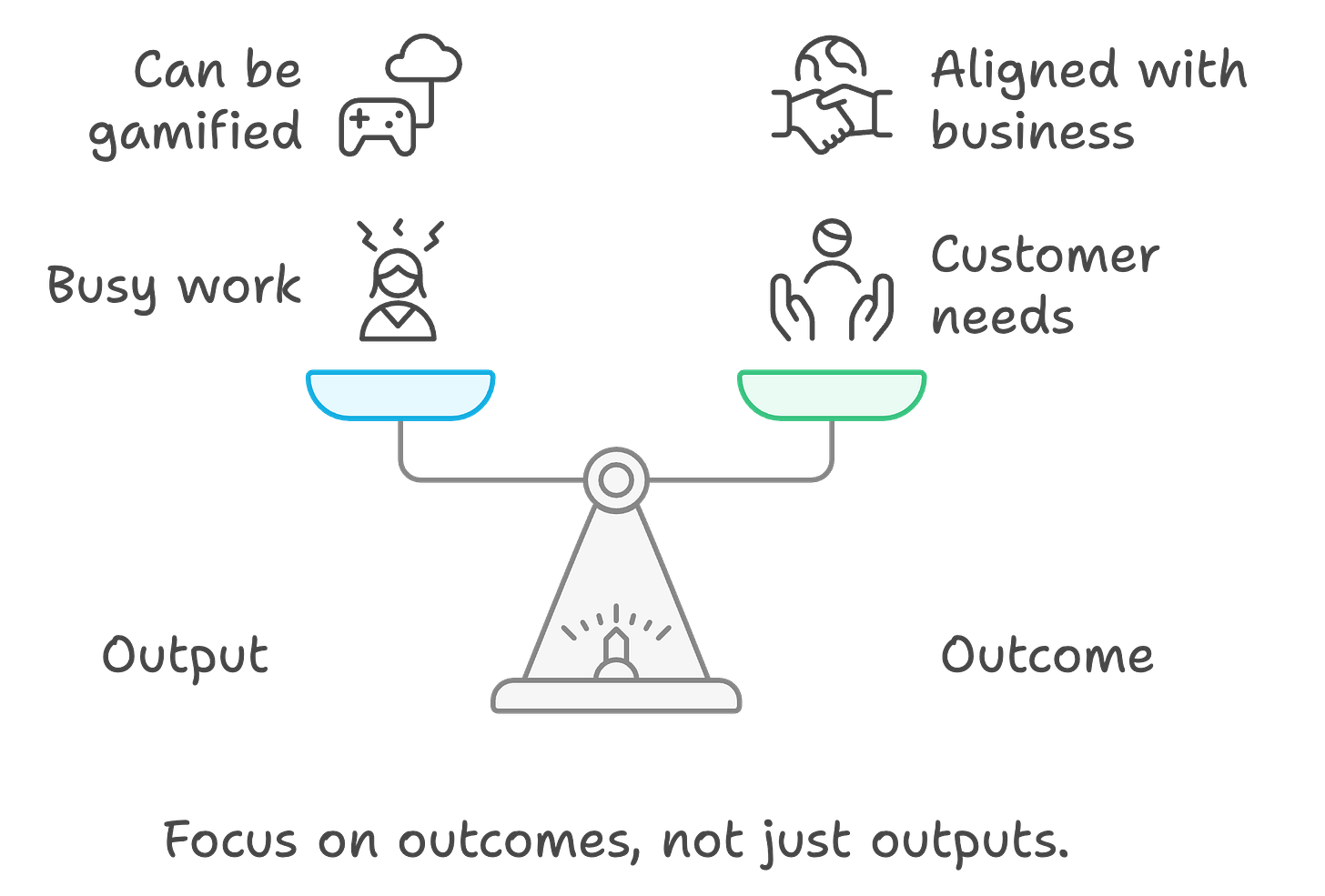

The main reason these metrics fall short is that they focus on outputs, not outcomes. As we discussed in previous articles, output metrics can be gamed and aren't necessarily aligned with business goals.

You might be wondering why we don't simply place developers closer to customers, on the assumption that this would tie their work more closely to outcomes. That question gets into team structure and depends on the type of product your organization produces; we will dive into it in a future article.

For the purposes of this article, let's look at why the McKinsey metrics fall short. Inner and outer loop measurements give system-level insight into where energy and effort are going. While I agree that developers' core focus should be on development-related activities, this metric doesn't serve teams that have issues with, for instance, collaboration. Taken at face value, it implies that time spent on collaboration, and on other key areas such as security, is detrimental. Teams could score well simply by focusing on development tasks, while customer interviews, which might be crucial, get overlooked in the effort to hit the metric.

At consultancies, survey-based indexes and scores are considered high-value because clients love them. Clients get benchmarked and gain insight into peers that they might not otherwise have, while for the consultancy they are a low-effort deliverable that is perceived as high value. Two of the metrics fall into this category: the Developer Velocity Index and the Talent Capability Score, which focus on team and individual performance respectively. In this case, I believe they are low value, as they can easily send the wrong signals. Let's explore some of these issues:

They don't account for the kind of work a team is doing - an R&D team will produce outputs at a different pace than a team that services customers regularly

These metrics focus only on output, so they encourage teams and developers to produce more code. Whether there is a valid business case for that output becomes less important, and the extra code increases security risks

Nothing in them relates to code quality, so quality issues only surface later in the cycle, causing delays

They don't take the team's maturity into account and apply pressure to increase output, which can lead to disastrous results. When a team is still getting the basics down, it's not the right time to push for speed

Finally, there is contribution analysis, which targets both the team and the individual level. This is also an output analysis and, for all the reasons above, a poor fit.

Metrics Fatigue

Realistically, teams and developers can only be measured against a limited number of metrics; too many will cause fatigue. Given that constraint, I can't see a scenario where I would recommend these over other industry metrics.

Those other metrics come from industry research groups, and many of the same people have been involved in both DORA and SPACE. These groups have gathered data from thousands of organizations to build their recommendations. McKinsey's focus in this space differs from theirs, so I would put more emphasis on the existing frameworks.

Quantifying Engineering Value

Circling back to the start of the article: when leadership asks these questions, the underlying concern is whether investments in the department are delivering business value. For software solution providers, this is an easier question to answer; once a product stops receiving investment, competitors will eventually overtake it and it will be deprecated.

You may have heard the saying "every business is a tech business". It highlights that, whether they realize it or not, businesses at their core run on technology (be it software systems, data integral to the business, AI, or otherwise). Reflecting on that phrase, it also suggests that many businesses are unaware of how much value technology brings to their operations. Often, these are tech-enabled businesses where IT is seen as a cost center. The question of engineering value then becomes one of where the business invests capital and whether that investment returns sufficient value. Because IT in these businesses may not be the revenue-generating engine, communicating its value becomes imperative.

I would stress that the metrics we've discussed so far are meant for internal measurement and may even send the wrong message if presented to executives without context. For example, lead time will suffer while the team is learning new processes to increase quality; once those processes are adopted, it will improve. That's why the narrative behind the metrics is so important.

I suspect part of the reason McKinsey provides these metrics is that they simplify decisions about who is doing a 'good' job on a team. McKinsey, after all, has a history of recommending layoffs and other sweeping changes, sometimes to the detriment of the business; its advice to Swissair before the airline's collapse in the early 2000s is a frequently cited example.

These metrics don't provide the business value one might assume simply because they come from McKinsey. Decisions based on them could lead to unintended consequences and may even reduce team morale if developers feel their hard work is going unnoticed. Do yourself a favour and skip these ones.
