Canada's GDPR Moment Is Coming. Your AI Vendor Isn't Ready.

Advocate to government and industry groups for regional, compliant infrastructure because you'll need it and it doesn't exist yet

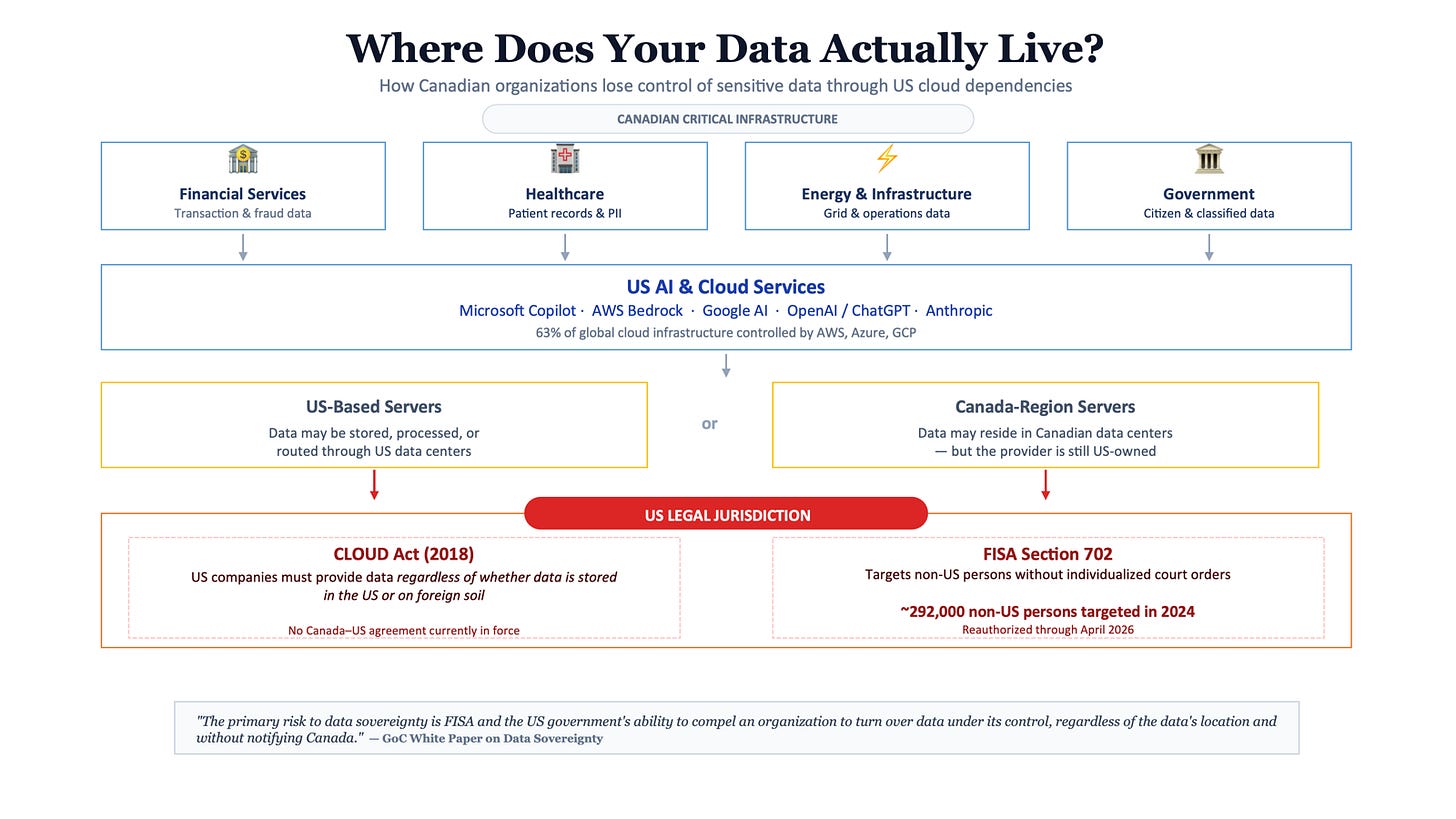

Every time a Canadian organization uses turnkey cloud AI services from Microsoft, AWS, or Google for tasks such as fraud detection, that transaction data could be demanded by US authorities under laws such as the Patriot Act and the CLOUD Act. Most CIOs assume their cloud contract protects them. It doesn’t. In regulated industries such as banking, these are not risks that can be ignored.

Unpredictable US tariffs have pushed Canada to diversify its trade partners. Yet many of our systems are controlled by the same country that is pressuring us economically, and we can’t repeat that pattern with AI rollouts. As we diversify our trade partnerships, Canada needs to recognize that tech infrastructure is a national security concern. Media coverage of these risks has been sparse, but these discussions need to be happening in boardrooms now.

I’ve spent the last 15 years helping organizations adopt US cloud technologies. My experience is exactly why I’m saying it’s time we explored a different path.

How we got here

Planning and maintaining your own servers carries overhead. Cloud service providers made it cheaper and easier to rent capacity than to build your own data centers. With serverless and similar offerings, time to market for product development dropped significantly.

This can be deceiving. Serverless is cheaper at first, when you don’t have many users on the platform, but over time the cost of every call adds up. Usage-based billing can bring unpleasant surprises.
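To see how the per-call cost catches up, here is a rough sketch of the break-even arithmetic. The prices are made-up placeholders, not any provider’s actual rates, and the function names are purely illustrative.

```python
import math

# Hypothetical figures for illustration only; substitute your own contract rates.
PRICE_PER_MILLION_CALLS = 20.00   # usage-based price, in dollars
FIXED_MONTHLY_COST = 400.00       # flat cost of self-managed capacity, in dollars


def usage_cost(calls: int, price_per_million: float = PRICE_PER_MILLION_CALLS) -> float:
    """Monthly bill under usage-based pricing."""
    return calls / 1_000_000 * price_per_million


def break_even_calls(fixed_cost: float = FIXED_MONTHLY_COST,
                     price_per_million: float = PRICE_PER_MILLION_CALLS) -> int:
    """Monthly call volume at which the usage-based bill reaches the fixed cost."""
    return math.ceil(fixed_cost / price_per_million * 1_000_000)


if __name__ == "__main__":
    for calls in (1_000_000, 10_000_000, 50_000_000):
        print(f"{calls:>12,} calls/month -> ${usage_cost(calls):,.2f}")
    print(f"Break-even at {break_even_calls():,} calls/month")
```

With these placeholder numbers, usage-based pricing wins below 20 million calls a month and loses above it. The point is to run the same calculation with your real rates before growth does it for you.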

As businesses migrated from Infrastructure-as-a-Service to Platform- and Software-as-a-Service models, those conveniences came with additional risks. Services such as M365 and Google Workspace have been adopted almost universally in Canada.

While these services make it easier to adopt new tools and publish your workloads, they offer no guarantees for those concerned with data sovereignty. US servers are subject to US law enforcement requests.

AI systems introduce another level of issues.

Concerns with AI adoption

With AI systems, specifically the popular LLMs available right now, there are several concerns:

Internal teams adopting third-party systems and sharing too much information. In regulated industries such as financial services this is a nightmare, as restricted and confidential data might be shared accidentally. Data lineage also becomes difficult to trace when systems aren’t transparent.

AI systems storing shared information for future training. OpenAI has already had past ChatGPT conversations surface on Google, where they were searchable by anyone.

Lack of trust on the consumer side, as these systems lack transparency and aren’t deterministic in their output.

The difference with AI systems (specifically LLMs) is how people adopt them and what they share. Traditional line-of-business applications have a limited scope. With AI applications, team members can paste in anything, including PII and restricted data.

We should acknowledge that AI systems also have significant influence in shaping individuals’ world views. These systems often start by replacing traditional search, so people only see what the system portrays. It’s not surprising that 53% of consumers distrust AI-powered search results and 70% are concerned that AI-generated content will be used to deceive them.

These systems share insights shaped by the biases introduced in training, and we need to acknowledge that. It’s imperative that we understand the geopolitical goals at play across the globe, and what’s working and what isn’t.

Current AI geopolitics

The US is pursuing the LLM rollout like the race to the moon: the goal is for the winner to take all the users and their data and become the de facto AGI solution. OpenAI, Google, and Anthropic are in a $100B capital race. The business model is to get users dependent on their systems, then raise prices once switching costs are high. It’s the cloud and streaming playbook repeated.

More concerning is the US’s laissez-faire attitude towards regulation. There’s little incentive to change while AI stocks dominate US stock markets. In fact, the Magnificent 7 stocks, all of which are tied to AI, represented 84% and 73% of the S&P 500’s total return in 2023 and 2024 respectively. Keep in mind that these companies’ AI products have yet to generate sustainable operating cash flow.

These solutions are decidedly not open source, because the goal is control and power over users. Side note: it’s funny how US LLM leaders are asking for regulation, so that when the crash happens they can blame someone else.

China has taken a very different approach, treating LLMs through a manufacturing lens. That means many models in different sizes, at different price points, and tuned for specific use cases. These models are often better value than what the US has to offer. It’s also why China seems far more aggressive with open source. Granted, these models aren’t fully open, but they tend in the right direction.

While China’s approach is strategically smarter than the US winner-take-all race, it must be said that these models are subject to CCP content restrictions. If we are looking towards a world where middle powers work together, having a single entity, whether American or Chinese, determine what counts as a reasonable response doesn’t work.

The EU’s focus has been on ethical adoption and on regulations that minimize risk while enabling innovation. The EU AI Act classifies systems into four categories of risk: minimal, limited, high, and unacceptable. Examples of unacceptable risk include predictive policing, social scoring, and real-time facial recognition in public spaces, as these systems might carry undue biases. High-risk applications include, but aren’t limited to, autonomous vehicles and law enforcement.

High-risk systems require auditability, conformity, accountability, and both pre-market and post-market (once deployed) monitoring. These rules apply to developers or providers, deployers of the system, and distributors. So as not to stifle innovation, the EU has adopted a sandboxed approach to rollouts, where regulators and providers or deployers work together to move projects forward.
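For teams that want to encode these tiers in their own tooling, a minimal sketch might look like the following. It only maps the examples named above to tiers and lists the high-risk obligations as described here. The names `RiskTier`, `EXAMPLE_TIERS`, and `triage` are hypothetical, and none of this is a legal classification under the Act.

```python
from __future__ import annotations

from enum import Enum


class RiskTier(Enum):
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Only the examples mentioned in this article; a real register needs legal review.
EXAMPLE_TIERS = {
    "predictive policing": RiskTier.UNACCEPTABLE,
    "social scoring": RiskTier.UNACCEPTABLE,
    "real-time facial recognition in public spaces": RiskTier.UNACCEPTABLE,
    "autonomous vehicles": RiskTier.HIGH,
    "law enforcement": RiskTier.HIGH,
}

# Obligations for high-risk systems, as summarized in the paragraph above.
HIGH_RISK_OBLIGATIONS = (
    "auditability",
    "conformity",
    "accountability",
    "pre-market monitoring",
    "post-market monitoring",
)


def triage(use_case: str) -> tuple[RiskTier | None, tuple[str, ...]]:
    """Return (tier, obligations); tier is None when the use case is not listed."""
    tier = EXAMPLE_TIERS.get(use_case.strip().lower())
    if tier is RiskTier.HIGH:
        return tier, HIGH_RISK_OBLIGATIONS
    # Unacceptable uses are prohibited outright, so no obligations make them
    # deployable. Unlisted use cases return None and need a human assessment.
    return tier, ()


if __name__ == "__main__":
    for case in ("Social scoring", "Autonomous vehicles", "Internal chatbot"):
        print(case, "->", triage(case))
```

Returning `None` for anything not on the list is deliberate: the sketch should push unknown use cases to a person rather than quietly default them to minimal risk.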

It should be noted that while Canada’s Artificial Intelligence and Data Act (AIDA) did not pass last year, it is expected to return and will likely align with the EU AI Act’s risk profiles and some form of sandboxing.

Canada has two primary vehicles for AI adoption: CIFAR, which focuses on policy and strategy, and the Scale AI supercluster, which focuses on adoption and commercialization of AI across Canadian supply chains and value chains. So what have we Canadians got for our approximately $340 million investment? It’s really only in the last two years that the vast majority of projects have been greenlit.

The standing criticism of superclusters is that the focus isn’t on owning data and IP. Instead, those get farmed out to large tech businesses with the goal of increasing adoption. Strategic IP exits the country because the ecosystem of opportunities is better south of the border. On top of that, these clusters are regionally focused, which means regional politics plays a bigger part than the importance of a national rollout.

We need to focus on developing strategic IP within Canada and ensuring that the right data stays here. Canadian enterprises’ dependency on US AI systems is significant, and we’re not adopting homegrown options. Let’s explore why we should be concerned about US dependency.

Canadian dependence on US AI

With AI, it isn’t just shadow IT that you need to be concerned about. Even security solutions such as Microsoft Sentinel include AI services that can’t guarantee your data won’t be housed in the US, in which case the US CLOUD Act and Patriot Act apply. For Canadian critical infrastructure such as finance and energy, this is a non-starter. OSFI’s guidelines for federally regulated financial institutions, B-10 on third-party risk and B-13 on technology and cyber risk, set expectations that these solutions don’t meet.

US-trained systems won’t align with Canadian values. In fact, they will project American priorities globally. It starts to make sense why China has built an ecosystem that can compete and offer services to its own citizens.

Additionally, when US companies control the AI models your team uses, they control what answers get prioritized, what data gets collected, and who can access it. We have a competitive intelligence problem.

We have an ongoing trade war and are now competing with the Americans globally in several industries, which means we need ownership of our systems. Scale AI was supposed to help us here, but its mandate is incomplete.

How Canada’s approach needs to change

There’s also a gap in the market with the AI solutions currently being rolled out: security and privacy are byproducts rather than designed in, and local values and voices are subdued in favour of those with a global footprint.

I believe there’s an opportunity to create something unique that embodies the best of China’s and the EU’s AI approaches. Canada should borrow China’s model diversity strategy (many specialized models vs. one AGI) and the EU’s risk-based regulation (strict rules for high-stakes uses, freedom for experimentation). We should double down on our Canadian advantage: abundant clean energy for training. The pitch is simple: sustainable AI with a lower carbon footprint and democratic governance.

Scale AI’s focus on commercialization over driving innovation needs to change to match Canada’s changing status in the global economy. Innovation doesn’t come from large businesses that are just looking to introduce operational efficiencies.

Training data is the lifeblood of any of these systems, so I propose we adopt a regional Canadian data center approach, where local values are built in from the ground up. Scale AI should pivot from helping big companies optimize to building shared infrastructure such as compute clusters, labeled datasets, and evaluation frameworks. These would let Canadian startups compete without Silicon Valley funding. Anyone looking to represent Canadian values would need to adopt our model and contribute any refinements here. Startups should get access to build tools and services for Canadians on top of this shared data system.

If Canada builds sovereign AI infrastructure that works, we create an export: a playbook for middle powers who want AI independence without choosing between US and Chinese tech. Australia, EU members, and Nordic countries all face the same dilemma.

Canada has a 24-month window before AI infrastructure consolidates around US and Chinese standards.

Here’s what needs to happen now:

Mandate data sovereignty for critical infrastructure

Redirect supercluster funding to shared compute

Fast-track regulatory sandbox for AI testing

The choice isn’t whether to compete - it’s whether to compete on our terms.

What you can do today

I’ve offered a lot of food for thought in this article. So what can leaders do today?

First, petition the government to support the development of local infrastructure and data. We have the land and some of the cheapest and greenest sources of electricity. It is entirely possible to build here and meet the necessary regulations.

For financial services and other heavily regulated industries, adopt the EU AI Act’s risk identification mechanism internally, and use it when evaluating your AI strategy. It’s worth noting that for Canadian businesses, if any of your AI systems are used in the EU, the AI Act does apply.

Identify which of your applications and workloads run on external systems and what data is being shared. Finally, speed up your internal adoption of Zero Trust Architecture. If your systems are built around minimizing your risk footprint, then when others are stressing about fines and unmet requirements, yours will already have these features baked in.
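The inventory step can start very simply. Here is a minimal sketch, assuming you can list each workload, whether it runs on an external system, which country’s law the vendor answers to, and what classes of data it handles. The workload names, the `Workload` fields, and the data labels are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Data classes treated as sensitive in this sketch; align with your own policy.
SENSITIVE = frozenset({"pii", "restricted", "confidential"})


@dataclass(frozen=True)
class Workload:
    name: str
    external: bool               # runs on a third-party system
    provider_jurisdiction: str   # country whose law the vendor answers to
    data_classes: frozenset[str]


def needs_review(w: Workload, home: str = "CA") -> bool:
    """Flag external workloads under foreign jurisdiction that touch sensitive data."""
    return (
        w.external
        and w.provider_jurisdiction != home
        and bool(w.data_classes & SENSITIVE)
    )


# Made-up inventory for illustration.
INVENTORY = [
    Workload("fraud-detection-api", True, "US", frozenset({"pii", "transactions"})),
    Workload("marketing-copy-assistant", True, "US", frozenset({"public"})),
    Workload("intranet-wiki", False, "CA", frozenset({"confidential"})),
]

FLAGGED = [w.name for w in INVENTORY if needs_review(w)]

if __name__ == "__main__":
    print("Review first:", FLAGGED)
```

The check keys on the provider’s jurisdiction rather than the data center’s location, since a contract with a US vendor can expose data regardless of where the servers sit.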

We help Canadian organizations build AI strategies that are sovereign, compliant, and future-proof. Reach us at hello@blubyte.io