AI's supply chain risk Canadians can't afford to ignore

It’s time we had a discussion on the risks of software supply chains related to AI adoption. Over the past few weeks there have been several scenarios that should give any Canadian leader in a regulated environment pause and thought on re-evaluating processes.

In February 2026, Anthropic refused the Pentagon’s demand for unrestricted military use of Claude, citing risks around mass surveillance and autonomous weapons. Defense Secretary Pete Hegseth gave CEO Dario Amodei until February 27 to comply. Anthropic held its position. The Trump administration responded by designating Anthropic a supply chain risk and ordering federal agencies to phase out its products within six months. Days later, OpenAI announced its own Pentagon deal.

Proponents of self-governance would rejoice that leaders at Anthropic made the right decision, but for the fact that they went back to negotiations earlier this month. Self governance and values always give way to shareholder, board, and business demands.

Meanwhile, TD Bank rolled out its AI strategy built on Microsoft’s stack. GitHub Copilot for engineering, generative AI assistants across contact centres and branches, and a proprietary model called AI Prism trained on bank datasets. TD’s stated goal is $1 billion in AI-generated value by 2026. The risk with technical supply chains to AI services and offerings is significantly higher than past systems.

These are systems people believe outputs even with hallucinations. And Microsoft itself disclosed that 31 companies across 14 industries had already been targeted through AI recommendation poisoning -- hidden instructions embedded in “Summarize with AI” features that manipulate chatbot memory and bias future outputs. Microsoft identified over 50 unique poisoning prompts over a 60-day window, with off-the-shelf tools making the technique trivially reproducible.

I’ve seen firsthand how these decisions are made in executive meetings, pick the approved vendor and lowest friction path; take their products because adoption will be easier. There’s little chance that TD looked at a product like Cohere that is targeted towards enterprise AI and would reduce the risk of any data sovereignty issues that might arise from Microsoft sending data to US servers.

Finally, Roey Eliyahu, CEO and co-founder of Salt Security, has come out stating the increased risk from orchestration for multi-agent architectures. This includes over-privileged access, integrations to sensitive data, and the increased auditing necessary.

Why It Matters

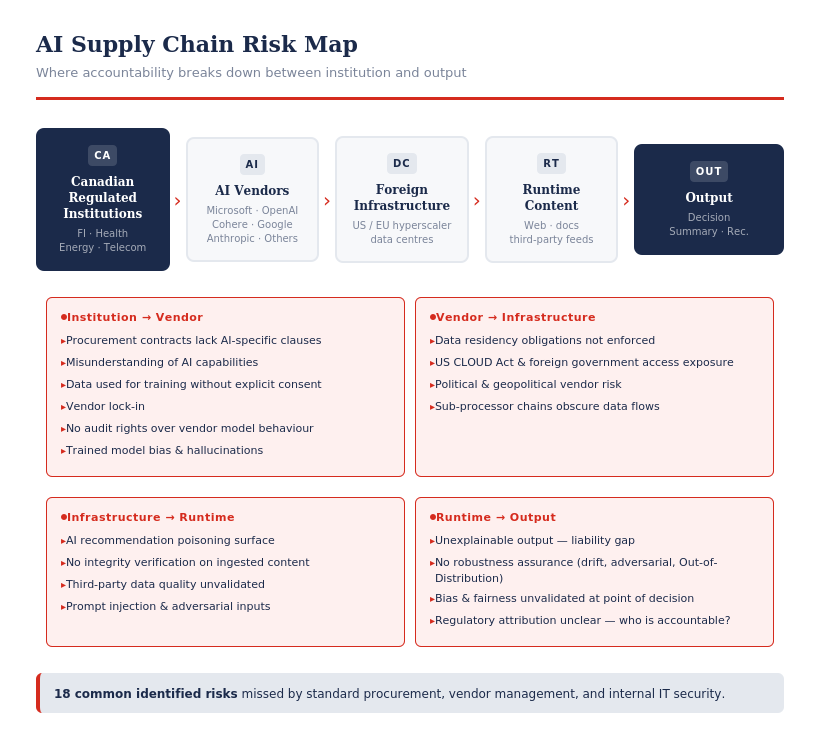

While these might look like separate incidents from AI governance to procurement, and cybersecurity risks, they share a root cause: AI systems increase technical supply chain risks and businesses need to plan for these.

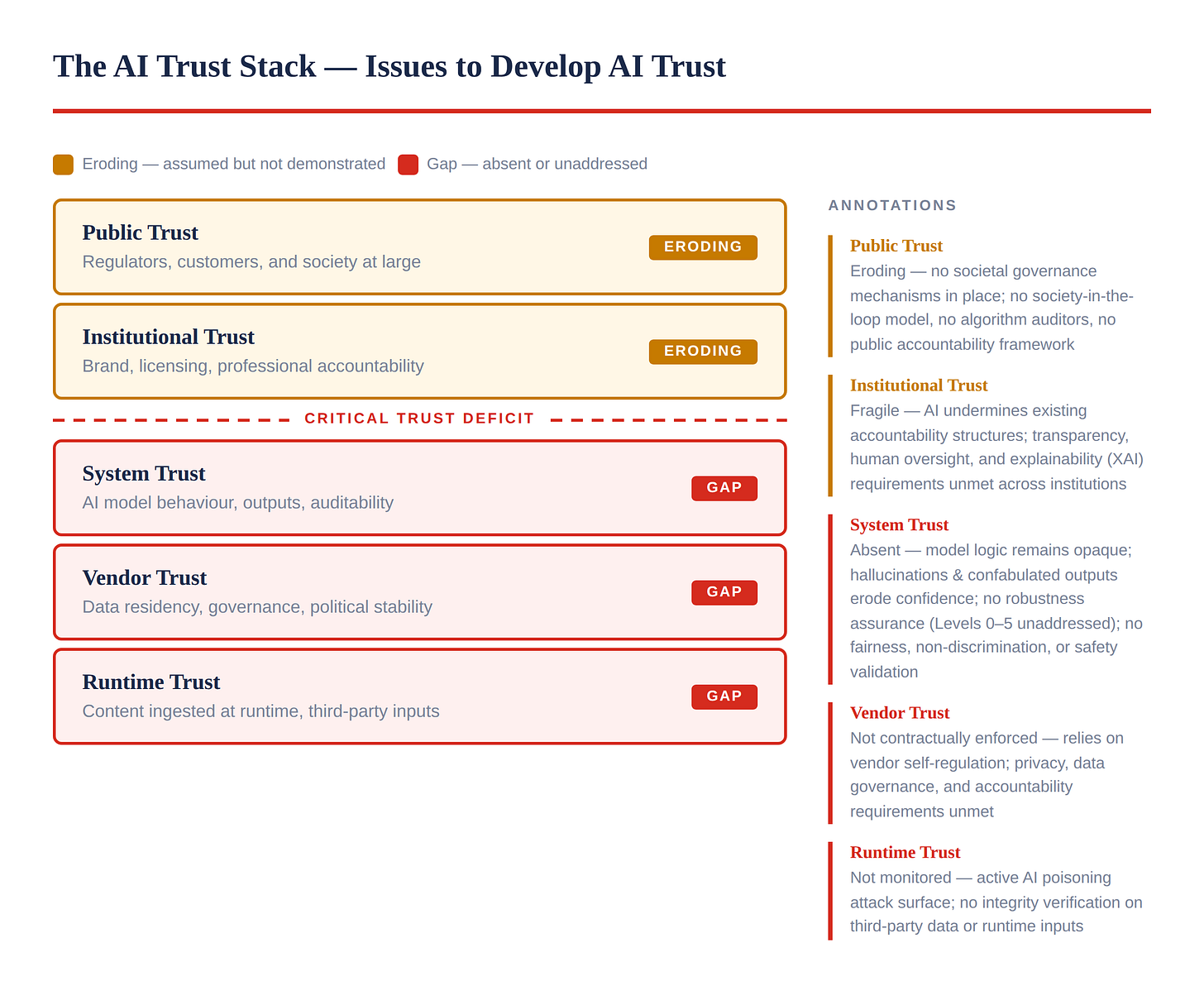

Anthropic’s refusal looked principled, until you remember it had already signed a $200 million Pentagon contract in July 2025 with only two self-imposed restrictions. The company drew its own red lines, and the moment a government pushed back, the entire relationship collapsed. Self-governance gave Anthropic the right to say no. It also gave the US government the right to blacklist them. Neither outcome produced a stable, trustworthy framework for critical infrastructure.

For Canadian financial institutions, the lesson is direct. TD built its AI ambitions on Microsoft’s platform. OSFI’s Guideline B-13 requires federally regulated institutions to manage technology and cyber risk across their third-party relationships including data residency, supply chain integrity, and operational resilience. Yet the question of where AI-processed data resides, who controls the model logic, and what happens when a US vendor’s political environment shifts remains unanswered in most FI vendor contracts.

The AI recommendation poisoning findings make this worse. If an FI deploys AI summarization tools across wealth management portals or internal knowledge bases, the content those tools ingest at runtime can be weaponized by external actors. OSFI B-13’s third-party risk management requirements should cover this exposure, but most institutions have not yet mapped AI summarization features against their risk frameworks.

In Energy and Transportation sectors where edge IoT devices are common, and AI built products will continue to rollout your operational technology needs to reduce blast radius should a device be compromised.

Additionally, Canadian regulated industries such as banking, transportation, and energy need to view agent orchestration security as a first-order procurement criterion, not an afterthought. The attack surface transferred to the institution through vendor-hosted agent networks is real and often unquantified in contracts.

Canada’s AI governance gap is well documented. AIDA failed to advance. OSFI and the Global Risk Institute formed the Financial Industry Forum on AI, which produced the voluntary EDGE Principles. Voluntary principles without enforcement mechanisms repeat the same pattern the Partnership on AI demonstrated - high-level commitments with no monitoring, no accountability, and members that act against the stated principles when commercial incentives demand it.

What to Do

Start with the EU AI Act risk levels. Canada stalled on AIDA. The EU didn’t. Their risk categorization — from minimal to unacceptable — gives you a working model today. Map your AI supply chain against it honestly. Some vendors you’re currently using for high-risk applications won’t survive that review. That’s the point. Not every AI company is built for the regulatory and accountability demands of critical infrastructure, and finding that out at procurement is far better than finding it out after a breach or a regulatory finding.

Zero Trust is no longer a roadmap item. I’ve watched too many organizations treat Zero Trust as a future-state aspiration while AI deployments are already live in production. That sequencing is backwards and dangerous. Agentic architectures, multi-model systems, and vendor-hosted AI all assume implicit trust that doesn’t exist. Every day you delay is another day your AI stack is running on security assumptions designed for a threat model that no longer applies.

Procurement is your most underused risk lever. The geopolitical environment has changed. What a vendor commits to today can collapse under political or commercial pressure tomorrow — Anthropic proved that in a single news cycle. Your contracts need to reflect that reality: data residency, model governance rights, exit provisions, and explicit clauses covering what happens when a vendor’s operating environment shifts. Business as usual in procurement is not a neutral choice right now. It’s a decision to absorb risk you haven’t priced.

If this raised questions you’re sitting with that’s exactly what we’re here for. Subscribe for analysis every 10 days. No noise, just discernment.

Connect on LinkedIn: https://www.linkedin.com/in/gurindersmann/

Work with us: hello@blubyte.io